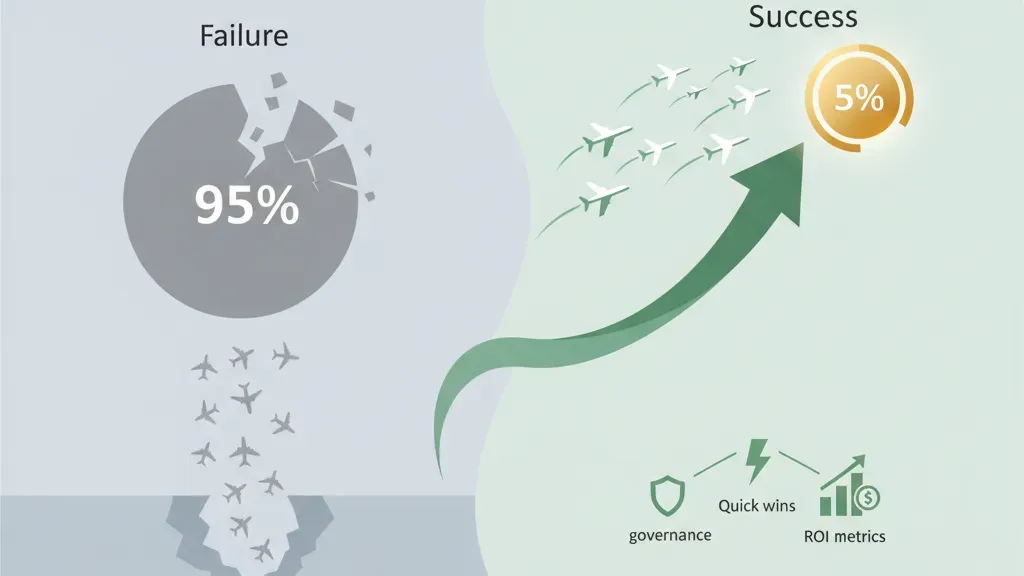

Why 95% of AI Pilots Fail (And How Your Team Can Be in the Top 5%)

Learn why most enterprise AI pilots fail and how a structured 90-day implementation strategy helps digital leaders succeed. Includes governance, quick wins, and ROI metrics.

Your board approved the AI initiative. You hired a data scientist. You allocated budget. By month five, the project is stalled, the business owner is frustrated, and you’re explaining why the pilot didn’t deliver.

You’re not alone. According to MIT research from 2025, approximately 95% of enterprise AI pilots fail to move beyond proof-of-concept into production. But here’s what’s counterintuitive: it’s rarely a technology problem.

The issue is implementation strategy.

Most organizations approach AI like they’ve approached software for decades—build in isolation, hand off to operations, hope it works. But AI doesn’t work that way. AI requires organizational alignment, cross-functional governance, clear ownership, and measurable quick wins that build momentum from day one.

This guide walks you through a proven 90-day roadmap to become part of the 5% of companies that actually ship AI and scale it.

The Pilot Trap: Why Enterprise AI Projects Stall

Let’s be honest about what goes wrong.

You launch an AI pilot. Your data science team builds an impressive model—90% accuracy on historical test data. It’s technically elegant. The team is proud. Your board is impressed by the demo.

Then comes the hard part: integration.

The model expects clean input data. Your data isn’t clean. The business process that would use this model involves five handoff points across three departments. No one owns the end-to-end workflow. IT wants control. The business owner wants autonomy. Finance needs to understand the ROI, but the metrics haven’t been defined yet.

By month four, you realize the model works, but the organization isn’t ready for it. The project gets paused. The team scatters to other work. Two years later, you audit AI spending and find dozens of abandoned models.

This happens at companies with billions in revenue. It happens because organizations optimize for individual project success, not for system-level implementation.

The Real Problem: It’s Not the AI, It’s the Organization

Here’s what separates the 5% that succeed from the 95% that stall:

The successful companies redesign their processes around AI before they build the AI.

Most companies try the opposite—they layer AI onto existing processes designed for humans. A mortgage approval process that takes 14 days? Add an AI model to speed it up. But if the process itself is inefficient (seven handoffs, three manual review stages), AI becomes a band-aid. You’re automating a broken workflow.

The organizations in the top 5% start with this question: “What would this process look like if we designed it for AI from the beginning?”

That question changes everything.

It forces you to:

- Identify the bottleneck, not just the opportunity (what’s actually stopping value?)

- Redesign the workflow to require minimal human judgment

- Define success metrics upfront (before you build anything)

- Secure buy-in from the people whose jobs change (the hardest part)

- Set up governance and compliance guardrails from day one (not after a breach)

This is why companies that partner with experienced AI implementation advisors move faster. They’ve seen the patterns. They know which questions to ask before you build.

The 90-Day Roadmap: A Four-Phase Implementation Strategy

Ninety days isn’t enough to build and scale enterprise AI. But it’s enough to:

- Identify the right problems

- Build proof of value with internal champions

- Establish governance and team structure

- Create a repeatable process for the next 10 pilots

Here’s how.

Phase 1: Assess & Identify Quick Wins (Weeks 1-3)

Objective: Identify 1-2 use cases with high impact, low complexity, and clear ROI.

Most organizations want to boil the ocean. “We’ll apply AI to sales, marketing, operations, and finance.” That’s how you fail. Success requires focus.

In week one, conduct a current-state assessment:

- Map the top 5-10 business processes

- Interview process owners: Where are the bottlenecks? Where are people doing repetitive work?

- Quantify the cost of the problem (hours spent, errors made, revenue lost)

- Assess data readiness (what data exists? Is it clean? Who owns it?)

- Identify compliance constraints (regulated data? PII? Audit requirements?)

By week two, you should have a prioritized list of quick-win opportunities.

A quick win typically:

- Solves a real bottleneck (not a “nice-to-have”)

- Relies on data you already have or can easily obtain

- Can show measurable ROI within 90 days (cost savings, time savings, or revenue increase)

- Involves 2-3 departments maximum (not your entire organization)

- Has a clear owner—usually a frustrated business leader who wants the problem solved

Examples of good quick wins:

- Customer support: An AI agent that handles 40% of inbound questions using FAQs and the knowledge base (reducing ticket volume by 100 per week, or 5,200 per year)

- Finance: Automated invoice processing (eliminate 30 hours per week of manual data entry)

- Sales: AI-powered lead scoring (shorten sales cycle by identifying high-fit prospects)

Examples of bad quick wins:

- “Predictive maintenance”—sounds cool but requires months of historical data and engineering changes

- “Next-quarter forecasting”—needs clean historical data and domain expertise

- “Sentiment analysis of all customer feedback”—tons of data, unclear business impact

By the end of week three, you should have one primary use case and one secondary backup (in case the primary stalls). Both should have executive sponsors.
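The Phase 1 prioritization can be sketched as a small scoring exercise. This is a minimal illustration, not a prescribed method: the `UseCase` fields, the value-over-complexity score, and every number in the sample data are assumptions made up for the example. The eligibility check mirrors the quick-win checklist above (usable data, at most three departments, a clear owner).

```python
from dataclasses import dataclass
from typing import List


@dataclass
class UseCase:
    name: str
    annual_value_usd: float  # estimated yearly cost of the problem (illustrative)
    complexity: int          # subjective rating, 1 (simple) to 5 (hard)
    data_ready: bool         # data exists already or is easy to obtain
    departments: int         # number of departments involved
    has_owner: bool          # a named business leader wants this solved


def is_quick_win(uc: UseCase) -> bool:
    """Checklist filter: usable data, at most 3 departments, a clear owner."""
    return uc.data_ready and uc.departments <= 3 and uc.has_owner


def score(uc: UseCase) -> float:
    """Rank higher value and lower complexity first (an assumed heuristic)."""
    return uc.annual_value_usd / uc.complexity


def prioritize(candidates: List[UseCase]) -> List[UseCase]:
    eligible = [uc for uc in candidates if is_quick_win(uc)]
    return sorted(eligible, key=score, reverse=True)


# Hypothetical candidates with made-up estimates.
candidates = [
    UseCase("Support FAQ agent", 150_000, 2, True, 2, True),
    UseCase("Invoice processing", 90_000, 2, True, 2, True),
    UseCase("Predictive maintenance", 400_000, 5, False, 4, False),
]

ranked = prioritize(candidates)
primary, backup = ranked[0], ranked[1]
print("Primary:", primary.name, "| Backup:", backup.name)
```

The largest opportunity on paper (predictive maintenance) drops out before scoring because it fails the checklist, which is the point of filtering first and ranking second.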

Phase 2: Build the Right Team & Set Governance (Weeks 3-6)

Objective: Establish the team structure, decision-making authority, and risk controls that will keep the project moving.

This phase feels like bureaucracy. It’s not. It’s what prevents rework.

Assemble the core team:

- Executive Sponsor (from the business, not IT)

  - Has budget authority

  - Removes blockers

  - Doesn’t need to understand AI, but needs to understand the business problem

  - Meets with the team weekly

- Project Lead (owns timeline and delivery)

  - Could be IT, could be business, but must have credibility in both worlds

  - Reports to the sponsor

  - Manages the 90-day roadmap

- Data Lead (owns data quality and pipeline)

  - Not necessarily a data scientist

  - Understands what data exists, how to access it, and how clean it is

  - Partners with IT on data governance

- Process Owner (the person whose job will change)

  - Knows the current process better than anyone

  - Will be your evangelist for the new process

  - Must believe the change is good (or find someone who does)

- Technical Lead (could be ML engineer, could be systems engineer)

  - Builds or implements the solution

  - Works closely with the business to understand requirements

- Compliance/Risk Lead (if regulated industry)

  - Ensures the solution meets regulatory requirements

  - Identifies risks early

This team meets twice per week for 90 days. Not an email chain. Not a status report. A working meeting.

Establish governance on four dimensions:

- Decision Authority

  - Who decides if the project moves to the next phase? (Usually the sponsor + project lead)

  - What’s the threshold for killing the project? (Define this upfront)

  - How are trade-offs resolved? (Speed vs. accuracy? Cost vs. capability?)

- Data Governance

  - Who owns the data used in the AI system?

  - Who can access the results?

  - How long is data retained?

  - What happens if a breach occurs?

- Model Governance (if you’re training a model)

  - How often is the model updated?

  - Who monitors model performance in production?

  - What’s the fallback if the model fails?

  - How do you handle drift (when real-world patterns change)?

- Change Management

  - Who gets trained on the new process?

  - How do you communicate changes to customers (if applicable)?

  - How do you handle edge cases or exceptions?

  - What’s the rollback plan?

These governance decisions sound dry, but they’re the difference between a project that ships and a project that gets stuck in legal review for six months.

Phase 3: Run a Scoped MVP with Internal Champions (Weeks 6-12)

Objective: Demonstrate value with a controlled group before you scale.

An MVP (minimum viable product) in an AI context doesn’t mean “a basic version.” It means the smallest scope that proves the concept works in your environment with your data and your people.

For the customer support example, this might mean:

- Deploy an AI agent to answer questions from 100 internal employees (not customers)

- Connect it to your actual FAQ and knowledge base

- Let customer service leaders see the results

- Let IT troubleshoot integration issues

- Give yourself 6 weeks to iterate before going live

Why internal champions matter:

The people who benefit from this change are your best salespeople. When a frustrated customer service manager says, “This thing just saved me 10 hours a week,” that lands differently than when IT says, “The model has 87% accuracy.”

Identify 2-3 internal champions—people from the business who will be early users, who are vocal in the organization, and who stand to benefit the most. Give them early access. Let them beat on it. Let them show their peers.

By week 12, you should have:

- A working AI system in a controlled environment

- Proof of the value you promised (quantified)

- A list of what needs to change before you scale

- At least one trained internal champion who can explain the business case to others

Phase 4: Measure, Learn, and Scale (Weeks 13+)

Objective: Take the learnings from the MVP and plan the next phase.

The MVP is not the end goal. It’s proof that the idea works. Now you scale.

But before you scale, you measure:

- Adoption rate: Are people actually using the AI system or working around it?

- Business impact: Did you achieve the ROI you projected?

- User satisfaction: Do the champions recommend it? Are there unexpected frustrations?

- Data quality: Was data cleaner or dirtier than expected?

- Compliance: Did you hit all the regulatory requirements without creating friction?

- Technical performance: Is the system reliable? What breaks under load?

After this measurement phase, you make a decision:

- Scale: The use case works. Roll it out across the full organization.

- Iterate: The idea is right but needs refinement

- Kill it: The idea didn’t work. Thank the team. Move on.

The worst outcome is ambiguity. You have the data now. Make the call.
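One way to avoid ambiguity is to write the decision rule down before the MVP starts. The sketch below assumes three inputs and four cut-off values that are entirely hypothetical; the real thresholds belong to the kill criteria your sponsor and project lead agreed on in Phase 2.

```python
from dataclasses import dataclass


@dataclass
class PilotResults:
    adoption_rate: float     # share of target users actively using the system (0 to 1)
    roi_achieved: float      # realized value divided by projected value
    compliance_passed: bool  # all regulatory checks were met


# Hypothetical thresholds, agreed upfront as part of decision authority.
SCALE_ADOPTION = 0.60
SCALE_ROI = 0.80
KILL_ADOPTION = 0.20
KILL_ROI = 0.30


def decide(results: PilotResults) -> str:
    """Return 'scale', 'iterate', or 'kill' from pre-agreed thresholds."""
    if (
        results.compliance_passed
        and results.adoption_rate >= SCALE_ADOPTION
        and results.roi_achieved >= SCALE_ROI
    ):
        return "scale"
    if results.adoption_rate < KILL_ADOPTION or results.roi_achieved < KILL_ROI:
        return "kill"
    # Promising but not ready: refine and re-measure.
    return "iterate"


print(decide(PilotResults(adoption_rate=0.72, roi_achieved=0.90, compliance_passed=True)))
print(decide(PilotResults(adoption_rate=0.45, roi_achieved=0.60, compliance_passed=True)))
print(decide(PilotResults(adoption_rate=0.10, roi_achieved=0.50, compliance_passed=True)))
```

A strong pilot that fails compliance lands in "iterate" rather than "scale" here; whether that is the right behavior is itself a governance decision.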

How to Avoid the Common Pitfalls

Based on patterns across hundreds of AI implementations, here are the things that most often derail projects:

1. Unclear business value

- Problem: You build a model. It’s technically correct. But no one asked: “So what?”

- Prevention: Before you code anything, define the metric that matters (revenue, cost, time) and how you’ll measure it

2. Mismatch between data and reality

- Problem: Your model trains on 2023 data. The world changed in 2024-2025. The model fails.

- Prevention: Monitor model performance in production. Set up alerts for drift. Plan to retrain quarterly

3. Siloed decision-making

- Problem: IT builds the AI. Business wants something different. No one was in the same room.

- Prevention: Get IT, business, compliance, and finance in the same room from week one

4. Scope creep

- Problem: You start with one use case. By month three, you’re trying to do five things. None of them ship.

- Prevention: Kill requests that expand scope. Use the phrase: “That’s a great idea. It’s for the next 90-day cycle.”

5. No change management

- Problem: You deploy an AI system. The people whose jobs change aren’t trained or consulted.

- Prevention: Identify the people affected. Train them early. Make them champions, not victims

6. No rollback plan

- Problem: The AI system fails in production. You have no way to revert to the old process. Customer-facing disasters ensue.

- Prevention: Before going live, define: What’s the manual workaround? Who executes it? How fast can we revert?
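The rollback plan in pitfall 6 can be built into the request path itself. The following is a rough sketch under stated assumptions: `handle_request`, the `ai_enabled` switch, the confidence cut-off of 0.8, and the stub handlers are all hypothetical names and values chosen for illustration. The idea is that the manual process stays callable, so reverting is a configuration change rather than a redeployment.

```python
from typing import Callable, Tuple


def handle_request(
    request: str,
    ai_handler: Callable[[str], Tuple[str, float]],
    manual_handler: Callable[[str], str],
    ai_enabled: bool = True,
    min_confidence: float = 0.8,
) -> Tuple[str, str]:
    """Return (answer, route), where route is 'ai' or 'manual'."""
    if ai_enabled:
        try:
            answer, confidence = ai_handler(request)
            if confidence >= min_confidence:
                return answer, "ai"
        except Exception:
            pass  # any AI failure falls through to the manual path
    return manual_handler(request), "manual"


# Stub handlers for illustration only.
def confident_ai(request: str) -> Tuple[str, float]:
    return f"AI answer to: {request}", 0.95


def broken_ai(request: str) -> Tuple[str, float]:
    raise RuntimeError("model service unavailable")


def manual_queue(request: str) -> str:
    return f"Queued for a human: {request}"


print(handle_request("reset password", confident_ai, manual_queue))
print(handle_request("reset password", broken_ai, manual_queue))
print(handle_request("reset password", confident_ai, manual_queue, ai_enabled=False))
```

Setting `ai_enabled=False` answers "How fast can we revert?" and the `manual_handler` answers "What’s the manual workaround?" Who executes it still has to be decided by people.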

ROI Metrics That Matter to Your Board

Your board doesn’t care about accuracy or latency. They care about money and risk.

Quantifiable metrics:

- Headcount reduction (e.g., “Eliminates 2 FTEs annually = $200K savings”)

- Time savings (e.g., “Reduces processing time from 2 days to 4 hours, saving 480 hours per year”)

- Revenue impact (e.g., “Improves lead conversion by 8% = $500K additional pipeline”)

- Cost avoidance (e.g., “Prevents fraud = $2M annual savings”)

- Cycle time reduction (e.g., “Shortens customer onboarding from 5 days to 24 hours”)

Risk metrics:

- Compliance adherence (e.g., “100% audit trail for regulated decisions”)

- Model drift detection (e.g., “Automated alerts if accuracy drops below 85%”)

- Explainability (e.g., “Every decision is traceable to decision rules or data inputs”)
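The drift alert in the list above could start as a rolling accuracy check. This is a simplified sketch, not a full drift-detection method: it assumes you eventually learn the correct outcome for each prediction, and the `AccuracyMonitor` class and window size are hypothetical. Only the 85% threshold comes from the example above.

```python
from collections import deque
from typing import Optional


class AccuracyMonitor:
    """Track accuracy over the most recent predictions and flag drops."""

    def __init__(self, window: int = 200, threshold: float = 0.85):
        self.outcomes = deque(maxlen=window)  # True where the prediction was right
        self.threshold = threshold

    def record(self, prediction, actual) -> None:
        self.outcomes.append(prediction == actual)

    def accuracy(self) -> Optional[float]:
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self) -> bool:
        # Wait for a full window so a few early misses don't trigger an alert.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.accuracy() < self.threshold


monitor = AccuracyMonitor(window=10, threshold=0.85)

# Nine correct predictions and one miss: 90% accuracy, no alert.
for _ in range(9):
    monitor.record("approve", "approve")
monitor.record("approve", "reject")
print(monitor.accuracy(), monitor.should_alert())

# Two more misses push the rolling window to 70%: alert.
monitor.record("approve", "reject")
monitor.record("approve", "reject")
print(monitor.accuracy(), monitor.should_alert())
```

In practice the alert would notify whoever was named as the monitoring owner under model governance, rather than print to a console.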

What not to present as ROI:

- Accuracy percentages (nice-to-have, not a business outcome)

- Processing speed improvements alone (only matters if it’s tied to business value)

- “Improved decision quality” without measurement

The board wants to see: We invested $X. We captured $Y in value. Here’s the proof. Here’s the next opportunity.
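The "invested $X, captured $Y" framing reduces to a few lines of arithmetic. In the sketch below, the 100 tickets per week and 30 hours per week come from the quick-win examples earlier in this guide; the $40 hourly cost and the $50,000 investment are made-up assumptions, as are the function names.

```python
def annualize(weekly_amount: float, weeks_per_year: int = 52) -> float:
    """Convert a weekly figure into a yearly one."""
    return weekly_amount * weeks_per_year


def roi(invested: float, value_captured: float) -> float:
    """Return on investment as a fraction: (Y - X) / X."""
    return (value_captured - invested) / invested


def payback_months(invested: float, annual_value: float) -> float:
    """Months needed for captured value to cover the investment."""
    return invested / (annual_value / 12)


tickets_avoided_per_year = annualize(100)  # 100 tickets per week
hours_saved_per_year = annualize(30)       # 30 hours per week of data entry

HOURLY_COST_USD = 40       # hypothetical loaded labor cost
INVESTED_USD = 50_000      # hypothetical pilot budget

annual_value = hours_saved_per_year * HOURLY_COST_USD

print(f"Tickets avoided per year: {tickets_avoided_per_year}")
print(f"Hours saved per year: {hours_saved_per_year}")
print(f"Annual value: ${annual_value:,.0f}")
print(f"First-year ROI: {roi(INVESTED_USD, annual_value):.1%}")
print(f"Payback: {payback_months(INVESTED_USD, annual_value):.1f} months")
```

Swapping in your own hourly cost and budget is the whole exercise. If the payback period is longer than the board's patience, the use case is probably not a quick win.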

Making the Top 5%: Partner with Experts

Ninety days sounds fast, but it’s realistic if you have experienced guides. Companies that work with AI implementation consultants move faster because the consultants have seen the patterns, know which steps can be shortened and which are critical, and bring frameworks that compress the timeline.

At Manaira Labs, we’ve guided enterprises through this process—from a struggling pilot to a production AI system with clear ROI. We built Bicara, an AI platform for Indonesian SMEs that demonstrates what thoughtful AI implementation looks like at scale.

We can help you navigate the pitfalls, establish the right governance, and get to your first quick win faster.

Ready to transform your AI implementation strategy? Contact us to discuss your roadmap. We can walk through your use cases, identify quick wins, and set up a timeline that works for your organization.

Or download our AI Implementation Playbook for Enterprises—it includes the 90-day roadmap template, governance checklist, ROI calculator, and decision frameworks that have worked for companies just like yours.

Key Takeaways:

- Roughly 95% of enterprise AI pilots stall before production, and the cause is usually implementation, not technology

- Success requires organizational alignment before you build the AI

- A 90-day sprint focused on 1-2 quick wins builds momentum and board confidence

- Governance, cross-functional teams, and change management are as critical as the model itself

- Measure value in business metrics—not accuracy—and scale only what works

Your AI implementation strategy determines whether you become part of the 5% that ships or the 95% that stalls. Start with the right roadmap. Partner with experienced advisors. And focus ruthlessly on quick wins that matter to your business.